May 12, 2026

By Anoop Jain

MCP Hit 97 Million Installs. Your Team Has Zero Engineers Who Know What That Means

Ninety-seven million. That’s how many times the Model Context Protocol SDK was downloaded in a single month: March 2026. Not cumulative. Monthly. It launched in November 2024 at roughly 2 million monthly downloads. Sixteen months later, it’s at 97 million. For context, Kubernetes, now considered foundational cloud infrastructure, took nearly four years to reach comparable adoption.

MCP is the fastest-adopted protocol in AI history. Every major AI provider and developer tool supports it. Claude. ChatGPT. Gemini. Copilot. Cursor. VS Code. JetBrains. The Agentic AI Foundation (co-founded by Anthropic, OpenAI, and Block, with AWS, Google, Microsoft, Cloudflare, and Bloomberg as platinum members) now governs it under the Linux Foundation. It sits alongside HTTP, Kubernetes, and PyTorch as infrastructure owned by the industry, not by any single company.

And here’s the part that should concern you: 78% of enterprise AI teams report at least one MCP-backed agent in production. 67% of CTOs surveyed expect MCP to be their default agent integration standard within twelve months. More than 80% of Fortune 500 companies are deploying active AI agents in production workflows, and the majority of those agents connect to tools via MCP.

Now walk down the hall to your engineering floor and ask a simple question: “Who on this team has built an MCP server?”

The silence you hear is the problem this post is about.

What MCP Actually Is (And Why It Matters More Than You Think)

Before we talk about the talent gap, let’s make sure we’re speaking the same language — because the biggest risk right now isn’t MCP ignorance. It’s MCP misunderstanding.

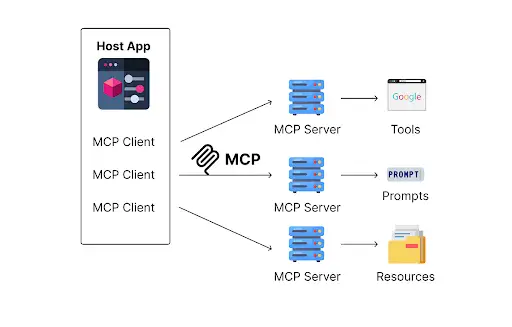

MCP — Model Context Protocol — is an open standard that defines how AI models connect to external tools, data sources, and systems. Think of it as the USB-C of AI. Before USB-C, every device needed its own cable. Before MCP, every time an AI system needed to talk to an external tool — a CRM, a database, a ticketing system — developers built a custom integration. One connector per model, per tool. Five AI models connecting to ten tools meant fifty separate integrations, each requiring unique maintenance.

MCP changes that math. Each tool gets one MCP server, and each AI application implements the protocol once; every MCP-compatible AI agent can then discover and use any server. The calculation shifts from multiplicative to additive: five models and ten tools need fifteen protocol implementations instead of fifty custom integrations. Integration costs drop by 60–70%. Provider lock-in decreases because switching between Claude, GPT, or Gemini becomes a configuration change rather than a rebuild.
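The multiplicative-versus-additive point is easy to check with a couple of lines of arithmetic. The sketch below assumes the simplified counting model used in the text: without a shared protocol, every model-tool pair needs its own connector; with MCP, each model (really, each host application) implements the client side once and each tool gets one server. Real deployments have more moving parts, so treat this as an illustration of the scaling shape, not a cost model.

```python
def point_to_point_integrations(models: int, tools: int) -> int:
    """Custom connectors needed when every model-tool pair is wired by hand."""
    return models * tools


def mcp_components(models: int, tools: int) -> int:
    """Protocol implementations needed with MCP, assuming one client
    integration per model (host) and one server per tool."""
    return models + tools


if __name__ == "__main__":
    for models, tools in [(5, 10), (10, 50)]:
        print(
            f"{models} models x {tools} tools: "
            f"{point_to_point_integrations(models, tools)} custom integrations "
            f"vs {mcp_components(models, tools)} MCP components"
        )
```

For the five-model, ten-tool case this gives 50 versus 15; at ten models and fifty tools the gap widens to 500 versus 60, which is why the additive model matters more as the tool count grows.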

But MCP isn’t just a convenience layer. It’s the foundational infrastructure for the agentic AI era. When an MCP server declares a tool, it doesn’t just say “here’s a function.” It provides semantic context — what the tool does, what parameters it needs, what it returns, and when it should be used. This is what allows AI agents to discover tools on demand, evaluate which is appropriate for a given task, and use them intelligently without human instruction at every step.
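To make that semantic context concrete, here is a minimal Python sketch of a tool declaration in the shape the MCP specification uses for `tools/list` entries (`name`, `description`, `inputSchema` as JSON Schema), plus the JSON-RPC 2.0 `tools/call` request an agent would send to invoke it. The `lookup_customer` tool, its fields, and the `make_tools_call` helper are hypothetical. A real server would normally be built with an official MCP SDK rather than hand-assembled messages, and would validate arguments against the full schema rather than just the `required` list.

```python
import json

# Hypothetical CRM tool, declared in the shape MCP uses for entries in a
# tools/list result: a name, a natural-language description that tells the
# agent what the tool does and when to use it, and a JSON Schema for inputs.
LOOKUP_CUSTOMER_TOOL = {
    "name": "lookup_customer",
    "description": (
        "Look up a customer record in the CRM by email address. "
        "Returns the account ID, plan tier, and open support tickets. "
        "Use this before answering billing or account questions."
    ),
    "inputSchema": {
        "type": "object",
        "properties": {
            "email": {
                "type": "string",
                "description": "Primary email address on the customer account.",
            }
        },
        "required": ["email"],
    },
}


def make_tools_call(request_id: int, tool: dict, arguments: dict) -> str:
    """Build a JSON-RPC 2.0 tools/call request after checking required args.

    Only the schema's 'required' list is checked here; full JSON Schema
    validation is left out to keep the sketch short.
    """
    required = tool["inputSchema"].get("required", [])
    missing = [key for key in required if key not in arguments]
    if missing:
        raise ValueError(f"missing required arguments: {missing}")
    return json.dumps({
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": tool["name"], "arguments": arguments},
    })


if __name__ == "__main__":
    print(make_tools_call(1, LOOKUP_CUSTOMER_TOOL, {"email": "ana@example.com"}))
```

The description carries most of the weight here: it is the text an agent reads when deciding whether this tool fits the task at hand.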

The public server registry has expanded from 1,200 servers in Q1 2025 to over 9,400 in April 2026, roughly a 7.8x increase in just over a year. There are 7,800 GitHub repositories carrying the mcp-server topic tag. Over 6,200 npm packages and 2,100 PyPI packages reference the MCP SDK. And that’s just the public ecosystem. 41% of enterprise AI teams have at least one custom internal MCP server that doesn’t appear in any public registry.

This is not a trend. It’s infrastructure. And infrastructure demands engineers who understand it.

The Skills Gap Nobody Planned For

Here’s what makes the MCP talent gap uniquely dangerous: it wasn’t predictable, it arrived fast, and it doesn’t map to any existing job category.

Eighteen months ago, “MCP engineer” wasn’t a role. There were no university courses. No bootcamp certifications. No LinkedIn skill badges. The protocol existed as a spec document with a handful of experimental implementations. The engineers who have real production MCP experience today gained it the only way possible — by building and deploying servers at the frontier, often in the first wave of adoption, at companies that moved before the rest of the market understood what was happening.

That first-mover cohort is small. Very small. And the demand for them is enormous.

The skill set required to build production MCP infrastructure goes well beyond “I read the documentation.” A real MCP integration engineer needs to understand several interconnected domains simultaneously.

Server architecture and semantic design. Building an MCP server isn’t wrapping an API. It’s creating a semantic contract — defining tools with rich descriptions that AI agents can discover, evaluate, and use intelligently. The difference between an MCP server that works and one that agents actually use effectively is the quality of that semantic layer. Engineers need to understand how agents reason about tool selection, how progressive discovery works, and how to design interfaces that are meaningful to AI — not just functional for humans.
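One way a team might enforce the quality of that semantic layer is a review-time lint over tool definitions. The Python sketch below is hypothetical: the word-count threshold and the specific checks are illustrative choices, not rules from the MCP specification, and a passing lint does not guarantee agents will pick the tool well. It only catches the most obvious gaps, such as one-line descriptions and undocumented parameters.

```python
from typing import Any

# Hypothetical threshold chosen for illustration; the MCP spec sets no minimum.
MIN_DESCRIPTION_WORDS = 12


def lint_tool(tool: dict[str, Any]) -> list[str]:
    """Flag gaps in a tool's semantic layer that make agent tool selection harder."""
    name = tool.get("name", "<unnamed>")
    problems: list[str] = []
    if len(tool.get("description", "").split()) < MIN_DESCRIPTION_WORDS:
        problems.append(f"{name}: description too short to say what it does and when to use it")
    schema = tool.get("inputSchema", {})
    properties = schema.get("properties", {})
    for param, spec in properties.items():
        if not spec.get("description"):
            problems.append(f"{name}.{param}: parameter has no description")
    for param in schema.get("required", []):
        if param not in properties:
            problems.append(f"{name}.{param}: listed as required but not declared")
    return problems


thin_tool = {
    "name": "get_cust",
    "description": "Gets customer.",
    "inputSchema": {"type": "object", "properties": {"id": {"type": "string"}}, "required": ["id"]},
}
rich_tool = {
    "name": "lookup_customer",
    "description": (
        "Look up a customer record in the CRM by email address. Returns the account ID, "
        "plan tier, and open support tickets. Use before answering billing questions."
    ),
    "inputSchema": {
        "type": "object",
        "properties": {"email": {"type": "string", "description": "Primary account email."}},
        "required": ["email"],
    },
}

if __name__ == "__main__":
    for tool in (thin_tool, rich_tool):
        print(tool["name"], lint_tool(tool) or "ok")
```

The thin definition trips two checks (short description, undocumented `id`), while the richer one passes; both tools are invented examples.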

Enterprise security and authentication. MCP servers in production environments require OAuth 2.1 with PKCE, SSO integration, role-based access controls, and audit trails. The protocol itself doesn’t fully solve these problems — they need to be engineered on top of it. For regulated industries, this means PCI-DSS, SOC 2, HIPAA, and GDPR-compliant implementations. The security surface is expanding fast, and the OpenSSF has already cataloged over 80 attack techniques specifically targeting tool-based LLM systems.
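PKCE is one concrete piece of that authentication stack. The Python sketch below shows the S256 transformation defined in RFC 7636: the client keeps a random `code_verifier` secret, sends only its hashed `code_challenge` with the authorization request, and proves possession of the verifier at token exchange. It covers only the PKCE step; the authorization and token endpoints, client registration, and token validation that an MCP deployment also needs are not shown, and the function names are illustrative.

```python
import base64
import hashlib
import secrets


def make_code_verifier() -> str:
    """High-entropy verifier. token_urlsafe(32) yields 43 URL-safe characters,
    the minimum length RFC 7636 permits (the maximum is 128)."""
    return secrets.token_urlsafe(32)


def s256_challenge(verifier: str) -> str:
    """code_challenge = BASE64URL(SHA256(ASCII(code_verifier))), padding stripped."""
    digest = hashlib.sha256(verifier.encode("ascii")).digest()
    return base64.urlsafe_b64encode(digest).rstrip(b"=").decode("ascii")


def verify_pkce(verifier: str, stored_challenge: str) -> bool:
    """Authorization-server side: recompute the challenge at token exchange
    and compare it with the stored one in constant time."""
    return secrets.compare_digest(s256_challenge(verifier), stored_challenge)


if __name__ == "__main__":
    verifier = make_code_verifier()       # kept secret by the client
    challenge = s256_challenge(verifier)  # sent with the authorization request
    print(challenge, verify_pkce(verifier, challenge))
```

RFC 7636's Appendix B gives a test vector worth checking any implementation against: the verifier `dBjftJeZ4CVP-mB92K27uhbUJU1p1r_wW1gFWFOEjXk` must produce the challenge `E9Melhoa2OwvFrEMTJguCHaoeK1t8URWbuGJSstw-cM`.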

Progressive discovery and context management. One of MCP’s most powerful patterns — and one of the hardest to implement well — is progressive discovery, where agents load tools on demand rather than consuming context with unnecessary definitions upfront. This is critical for efficiency, especially when agents have access to dozens or hundreds of potential tools. Getting this right requires understanding both the AI side (how models manage context windows and token budgets) and the infrastructure side (how to design servers that expose capabilities incrementally).
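A simple way to picture progressive discovery is a catalog that hands the agent short summaries up front and returns a full definition only when one is requested. The Python sketch below assumes that two-tier design for illustration; the summary/full split, the `ToolCatalog` class, and the synthetic `warehouse_query_*` tools are hypothetical, not something the MCP specification defines. It uses JSON character counts as a crude stand-in for token cost.

```python
import json
from dataclasses import dataclass, field


@dataclass
class ToolCatalog:
    """Hypothetical two-tier catalog: agents see one-line summaries up front
    and pull a tool's full definition only when they decide to use it."""
    tools: dict = field(default_factory=dict)
    loaded: set = field(default_factory=set)

    def register(self, definition: dict) -> None:
        self.tools[definition["name"]] = definition

    def summaries(self) -> list:
        # First sentence of each description only; input schemas are withheld.
        return [
            {"name": name, "summary": d["description"].split(". ")[0]}
            for name, d in self.tools.items()
        ]

    def load(self, name: str) -> dict:
        # Return the full definition and record that it entered the context.
        self.loaded.add(name)
        return self.tools[name]


def make_tool(i: int) -> dict:
    """Synthetic tool definitions used only to compare payload sizes."""
    return {
        "name": f"warehouse_query_{i}",
        "description": f"Runs saved query {i} against the data warehouse. "
                       "Accepts a table name and an optional filter expression.",
        "inputSchema": {
            "type": "object",
            "properties": {
                "table": {"type": "string", "description": "Target table name."},
                "filter": {"type": "string", "description": "Optional filter expression."},
            },
            "required": ["table"],
        },
    }


catalog = ToolCatalog()
for i in range(50):
    catalog.register(make_tool(i))

upfront_all = len(json.dumps(list(catalog.tools.values())))
upfront_summaries = len(json.dumps(catalog.summaries()))

if __name__ == "__main__":
    print(f"all definitions: {upfront_all} chars; summaries only: {upfront_summaries} chars")
    print(catalog.load("warehouse_query_7")["inputSchema"]["required"])
```

The trade-off is an extra round trip whenever the agent needs a schema, in exchange for a much smaller fixed cost when the catalog holds dozens of tools.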

Production operations at scale. Current MCP servers must maintain session state, which limits horizontal scaling. The 2026 roadmap addresses this with stateless operation, but enterprises deploying today need engineers who can architect around these limitations — session management, load balancing, failover, monitoring, and the kind of operational resilience that production systems demand.
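One common way to architect around server-side session state is session affinity: route every request for a session to the replica that holds that session. MCP’s Streamable HTTP transport lets a server assign a session ID that the client sends back in an `Mcp-Session-Id` header, which gives a router a stable key to hash. The Python sketch below uses rendezvous (highest-random-weight) hashing so that independent routers agree on the owning replica without sharing state. Replica names and the session value are hypothetical, and affinity alone does not solve failover: a replica’s sessions are lost when it goes down unless their state is persisted elsewhere.

```python
import hashlib


def pick_replica(session_id: str, replicas: list) -> str:
    """Rendezvous hashing: score every replica against the session ID and pick
    the highest. Every router computes the same winner, and removing a replica
    only remaps the sessions that were on it."""
    def weight(replica: str) -> int:
        digest = hashlib.sha256(f"{replica}|{session_id}".encode("utf-8")).digest()
        return int.from_bytes(digest[:8], "big")

    return max(replicas, key=weight)


if __name__ == "__main__":
    replicas = ["mcp-a", "mcp-b", "mcp-c"]
    session = "3f2c9a"  # e.g. the value a client echoes back in Mcp-Session-Id
    print(pick_replica(session, replicas))
```

Compared with a plain `hash(session) % len(replicas)`, this choice avoids reshuffling most sessions whenever the replica count changes.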

Governance and compliance infrastructure. As MCP becomes the default integration layer for AI agents, governance becomes an engineering problem. Audit trails, access logging, data lineage, compliance reporting — these aren’t afterthoughts. They’re requirements, especially with the EU AI Act’s compliance obligations beginning in August 2026.
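As a rough picture of what “governance as an engineering problem” means in code, the Python sketch below puts a gateway in front of tool execution that checks a role-based policy and writes an audit record for every attempt, denied or allowed. The policy, roles, tool names, and `AuditedToolGateway` class are all hypothetical. A production system would load policy from an identity provider or policy engine and write audit records to durable, tamper-evident storage rather than an in-memory list.

```python
import json
import time
from dataclasses import dataclass, field
from typing import Any

# Hypothetical role-to-tool policy; in production this would come from the
# identity provider or a policy engine, not a hard-coded dict.
POLICY = {
    "support_agent": {"lookup_customer"},
    "finance_agent": {"lookup_customer", "issue_refund"},
}


@dataclass
class AuditedToolGateway:
    """Checks each tool call against POLICY and appends an audit record,
    for denied calls as well as allowed ones, before executing it."""
    tools: dict
    audit_log: list = field(default_factory=list)

    def call(self, principal: str, role: str, tool: str, arguments: dict) -> Any:
        allowed = tool in POLICY.get(role, set())
        self.audit_log.append(json.dumps({
            "ts": time.time(),
            "principal": principal,
            "role": role,
            "tool": tool,
            "arguments": arguments,  # may contain personal data; redact as policy requires
            "allowed": allowed,
        }, sort_keys=True))
        if not allowed:
            raise PermissionError(f"role {role!r} may not call {tool!r}")
        return self.tools[tool](**arguments)


gateway = AuditedToolGateway(tools={
    "lookup_customer": lambda email: {"email": email, "tier": "pro"},
    "issue_refund": lambda order_id, amount: {"order_id": order_id, "refunded": amount},
})

if __name__ == "__main__":
    print(gateway.call("agent-17", "support_agent", "lookup_customer", {"email": "ana@example.com"}))
    try:
        gateway.call("agent-17", "support_agent", "issue_refund", {"order_id": "A1", "amount": 40})
    except PermissionError as exc:
        print("denied:", exc)
    print(len(gateway.audit_log), "audit records")
```

Logging the denied attempt matters as much as logging the successful one: it is usually the record an auditor asks for first.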

No single bootcamp teaches this stack. No university program produces engineers with this combination of skills. And no amount of “we’ll figure it out as we go” will close the gap fast enough for companies whose competitors are already in production.

What Happens When You Don’t Have MCP Talent

The consequences aren’t theoretical. They’re already playing out across the enterprise landscape.

Your AI agents can’t talk to your systems. The entire promise of agentic AI — autonomous systems that execute multi-step workflows across your business — depends on those agents being able to connect to your tools and data. Without MCP-capable engineers, your agents are isolated. They can reason beautifully, but they can’t do anything that requires touching a database, a CRM, an API, or a document store.

You build fragile point-to-point integrations instead. Without MCP, teams fall back on the old model — custom connectors for each AI-model-to-tool combination. This is expensive to build, expensive to maintain, and creates brittle dependencies that break every time a model updates or an API changes. One production test showed that MCP-based integrations achieved 100% task success rates with compute costs dropping up to 30% compared to custom integration approaches.

Your product becomes invisible to the ecosystem. If your SaaS product doesn’t have an MCP server, your customers’ AI agents can’t discover or use it. Forrester predicts 30% of enterprise app vendors will launch MCP servers in 2026. Gartner predicts 40% of enterprise applications will include AI agents by year-end. The products that become “agent-ready” in 2026 win the enterprise deals. The ones that don’t will spend 2027 explaining to their board why they’re losing RFPs.

You can’t participate in the agent economy. MCP isn’t just about connecting tools. It’s the foundation for an agent economy where specialized AI services interoperate seamlessly. The 2026 roadmap includes agent-to-agent coordination — one AI agent calling another through MCP, creating hierarchical architectures where orchestrator agents delegate to specialized sub-agents. Companies without MCP capability won’t just be behind. They’ll be locked out.

Why Traditional Hiring Can’t Fix This

Let’s do the math on trying to hire your way out of this problem.

Step one: you write a job description for an “MCP Integration Engineer.” Your HR team has never seen this title before. They look it up. There’s no standardized job listing template. There’s no salary benchmark data. The recruiters have no network of MCP specialists because the role barely exists as a recognized category.

Step two: you post the listing. The qualified candidate pool — engineers with genuine production MCP experience, not just people who read the docs — numbers in the low thousands globally. The companies trying to hire them number in the hundreds of thousands. Your listing competes with every other enterprise that’s had the same realization you just had.

Step three: you wait. Four months. Six months. Maybe you get a candidate. Maybe they accept. Maybe they don’t back out for a better offer during their notice period. If everything goes perfectly (and it rarely does), you have one MCP engineer starting roughly six months from now, needing another month or two to understand your specific architecture and data landscape.

Meanwhile, MCP’s ecosystem is growing at 18% month-over-month. The server registry is projected to cross 12,000 by July 2026 and 18,000 by year-end. Your competitors who staffed for MCP three months ago are already shipping agent-connected products.
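The registry projections can be sanity-checked with compound-growth arithmetic. The Python sketch below computes the monthly rate each projection implies, using the figures from this post (over 9,400 servers in April 2026, about 12,000 by July, about 18,000 by year-end, taking year-end as December). Both come out near 8.5% per month rather than 18%, so the 18% figure presumably tracks a different metric, which the text does not specify.

```python
def implied_monthly_growth(start: float, end: float, months: int) -> float:
    """Compound monthly growth rate that takes `start` to `end` in `months`."""
    return (end / start) ** (1 / months) - 1


# Figures from the text: ~9,400 servers in April 2026, ~12,000 projected for
# July (3 months later) and ~18,000 for year-end (8 months later, December).
to_july = implied_monthly_growth(9_400, 12_000, 3)
to_december = implied_monthly_growth(9_400, 18_000, 8)

if __name__ == "__main__":
    print(f"April to July: {to_july:.1%} per month")
    print(f"April to December: {to_december:.1%} per month")
```

Even at roughly 8.5% a month, the registry would nearly double within eight months, which still supports the point that hiring timelines measured in quarters fall behind.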

The hiring timeline and the technology timeline are fundamentally incompatible. You cannot recruit for the frontier at the speed of 1990s HR processes.

The Augmentation Play: How the Fastest Companies Are Staffing for MCP

The companies that are actually deploying MCP-connected agentic systems in production right now — the ones shipping while others are still drafting job descriptions — are using AI staff augmentation. And the pattern is consistent.

They identify the specific MCP capability they need: a server connecting AI agents to their internal CRM. An MCP integration layer for their data warehouse. A secure, governance-compliant server architecture for a regulated environment. An agent-to-tool connectivity layer for their new agentic customer service system.

They engage a staffing partner — like gNxt Systems — that maintains an active network of engineers with documented MCP production experience. Not engineers who’ve read the spec. Engineers who’ve built servers, deployed them in enterprise environments, handled the authentication and governance challenges, and operated them at scale.

Within one to two weeks, that specialist is onboarded into their team. Same standup. Same codebase. Same tools. Same code review process. Writing production MCP infrastructure from day one — because they’ve done exactly this work before, at other companies, in other industries.

And critically, they’re transferring knowledge as they build. Pair programming with internal engineers. Documenting architecture decisions. Creating operational runbooks. Running structured handoff sessions. So when the engagement ends — typically 60 to 120 days for MCP-specific work — the internal team doesn’t just have a working system. They have the understanding to maintain, extend, and evolve it.

This is how you close a skills gap that the hiring market can’t fill. Not by waiting for the talent pool to grow. Not by hoping your existing team can self-teach from documentation. But by embedding someone who’s already at the frontier and letting them pull your team forward.

What Your MCP Roadmap Should Look Like

If you’re starting from zero MCP capability — and there’s no shame in that, given how new this is — here’s the sequence that the fastest-moving companies are following.

Phase 1 (Weeks 1–4): Foundation. Engage an augmented MCP specialist to audit your current AI architecture, identify the highest-value integration points, and design the MCP server architecture. This phase produces a technical blueprint and a prioritized implementation roadmap.

Phase 2 (Weeks 5–12): Build. Build and deploy your first production MCP servers — typically starting with the one or two integrations that unlock the most value for your agentic AI initiatives. Simultaneously, the augmented specialist begins pair programming with internal engineers, establishing the knowledge transfer that makes the rest of the roadmap self-sustaining.

Phase 3 (Weeks 13–16): Harden and Handoff. Security review, load testing, governance implementation, and the structured handoff that transfers full operational ownership to your internal team. By the end of this phase, your team can independently build, deploy, and maintain MCP servers.

Phase 4 (Ongoing): Scale. With the foundation in place and the knowledge transferred, your internal team extends the MCP layer across your enterprise — adding servers for additional tools, implementing progressive discovery patterns, and evolving the architecture as the protocol matures.

Total timeline from zero MCP capability to production deployment with internal ownership: roughly four months. Total timeline if you try to hire a full-time MCP engineer through traditional channels: roughly the same four months just to get the hire started — with no guarantee of a successful search.

The Window Is Now

MCP isn’t emerging. It has emerged. Ninety-seven million monthly installs. 10,000+ public servers. 78% of enterprise AI teams with at least one MCP-backed agent in production. Every frontier AI lab. Every major IDE. Every enterprise cloud platform. Governed by the Linux Foundation. Growing at 18% month-over-month.

The companies that build MCP capability now are laying the integration infrastructure that their agentic AI systems will run on for years. The companies that wait are building on sand — custom integrations that will need to be rebuilt, agent architectures that can’t scale, and a growing gap between what their AI could do and what their team can actually connect it to.

The protocol wars are over. MCP won. The only remaining question is whether your team can build with it.

If the answer is “not yet” — that’s fixable. But not by waiting.

References & Sources

- Digital Applied — “MCP Adoption Statistics 2026: Model Context Protocol” (April 2026) — https://www.digitalapplied.com/blog/mcp-adoption-statistics-2026-model-context-protocol

- AI2Work — “Model Context Protocol Hits 97M Installs as Linux Foundation Takes Over” (April 2026) — https://ai2.work/blog/model-context-protocol-hits-97m-installs-as-linux-foundation-takes-over

- Effloow — “MCP Ecosystem in 2026: From Experiment to 97 Million Installs” (March 2026) — https://effloow.com/articles/mcp-ecosystem-growth-100-million-installs-2026

- Bonjoy — “What Is MCP — Model Context Protocol for Enterprise AI” (April 2026) — https://bonjoy.com/articles/what-is-mcp-model-context-protocol-enterprise-guide/

- Knak — “MCP Adoption in 2026: What Marketers Need to Know” (March 2026) — https://knak.com/blog/mcp-adoption-in-2026-what-marketers-need-to-know/


About the Author

CEO at gNxt Systems

With 25+ years of expertise, Mr. Anoop Jain delivers complex projects, driving innovation through IT strategies and inspiring teams to achieve milestones in a competitive, technology-driven landscape.