- March 24, 2026

- by Anoop Jain

Hiring for RAG Pipelines? Here’s What Enterprises Get Wrong

Retrieval-Augmented Generation (RAG) has quickly become one of the most important architectures in enterprise Generative AI. For organizations building AI copilots, enterprise search systems, internal knowledge assistants, customer support automation, and domain-specific question-answering tools, RAG offers a practical way to connect large language models (LLMs) to proprietary business data. It helps improve contextual accuracy, reduce hallucinations, and make GenAI applications more useful in real-world enterprise settings.

That promise has made RAG pipelines a major priority for enterprises across industries. But as interest in RAG has grown, so has a pattern of hiring mistakes that slows down delivery and weakens outcomes.

Many enterprises still approach RAG hiring as if it were a simple model-layer problem. They assume that finding one “GenAI engineer” or one “LLM expert” will be enough to build, deploy, and manage a reliable RAG system. In reality, RAG is not a single-role problem. It is a layered engineering problem that spans data ingestion, search relevance, vector infrastructure, backend integration, LLM orchestration, observability, governance, and production operations.

This gap between perception and reality is exactly why so many enterprise RAG initiatives struggle. The architecture may look deceptively simple on slides, but the actual execution demands far more coordination than many hiring plans account for. If enterprises want RAG systems that perform reliably in production, they need to stop hiring for the headline term and start staffing for the underlying capability stack.

Why RAG Pipelines Are More Complex Than They Look

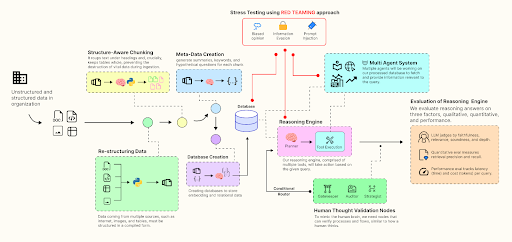

At first glance, RAG appears straightforward. Enterprise documents are ingested, converted into embeddings, stored in a vector database, retrieved when a user enters a query, and then passed into an LLM to generate a grounded answer. Conceptually, it feels elegant and manageable.
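The flow described above can be sketched in a few dozen lines. This is a minimal illustration only. It assumes a toy bag-of-words vector in place of a real embedding model and an in-memory list in place of a vector database. `embed`, `InMemoryVectorStore`, and `build_prompt` are hypothetical names, and the final LLM call is left out so the sketch stays self-contained.

```python
import math
import re
from collections import Counter


def embed(text):
    """Toy stand-in for an embedding model: a bag-of-words term-frequency vector."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a, b):
    """Cosine similarity between two sparse term-frequency vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


class InMemoryVectorStore:
    """Hypothetical stand-in for a vector database: a list of (doc_id, text, vector) rows."""

    def __init__(self):
        self.rows = []

    def add(self, doc_id, text):
        # Ingestion: embed the document once and store the vector with its ID.
        self.rows.append((doc_id, text, embed(text)))

    def search(self, query, k=2):
        # Retrieval: score every stored vector against the query and keep the top k.
        q = embed(query)
        scored = [(cosine(q, vec), doc_id, text) for doc_id, text, vec in self.rows]
        scored.sort(key=lambda row: row[0], reverse=True)
        return scored[:k]


def build_prompt(query, hits):
    """Generation input: ask the LLM to answer only from the retrieved context."""
    context = "\n".join(f"[{doc_id}] {text}" for _, doc_id, text in hits)
    return (
        "Answer using only the context below and cite document IDs.\n"
        f"Context:\n{context}\n\nQuestion: {query}"
    )


# Usage with two made-up documents; the prompt would then be sent to an LLM.
store = InMemoryVectorStore()
store.add("hr-01", "Employees accrue 20 days of paid leave per year.")
store.add("it-07", "Reset your VPN password from the self-service portal.")
hits = store.search("How many days of paid leave do employees get?", k=1)
print(build_prompt("How many days of paid leave do employees get?", hits))
```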

But production-grade RAG is far more demanding than this simplified explanation suggests.

A RAG pipeline does not succeed merely because a model is strong. Its quality depends heavily on what happens before the model ever generates an answer. The retrieval layer must identify the right content. The data layer must ensure documents are current, clean, and structured correctly. The application layer must deliver responses in a secure, performant, and auditable way. Small mistakes in any of these layers can significantly reduce trust in the system.

For example, a weak chunking strategy can separate related ideas and destroy contextual meaning. Poor metadata design can prevent accurate filtering and permission control. Inadequate ingestion logic can allow outdated or duplicate documents to dominate retrieval. A flawed reranking mechanism can surface irrelevant content. All of this can happen even when the LLM itself is powerful.

This is why enterprises that treat RAG as a lightweight enhancement often underestimate the engineering effort required. A working prototype can be built quickly. A reliable enterprise system cannot.
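The chunking and duplicate-content failures described above can be made concrete with a minimal sketch of one common approach. The text is split into fixed-size windows with overlap, so content near a boundary is shared by two neighbouring chunks instead of being cut cleanly in two. Exact duplicates are then dropped by content hash. Word counts stand in for tokens here, and `chunk_words` and `dedupe` are hypothetical helpers. Many production pipelines split on document structure (headings, sections, tables) instead, and also need to catch near-duplicates, not just exact ones.

```python
import hashlib


def chunk_words(text, size=50, overlap=10):
    """Split text into windows of `size` words, each sharing `overlap` words with the previous one."""
    if not 0 <= overlap < size:
        raise ValueError("overlap must be >= 0 and smaller than size")
    words = text.split()
    step = size - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + size]))
        if start + size >= len(words):
            break  # this window already reaches the end of the text
    return chunks


def dedupe(chunks):
    """Drop exact-duplicate chunks (after case and whitespace normalization) using a content hash."""
    seen = set()
    unique = []
    for chunk in chunks:
        key = hashlib.sha256(" ".join(chunk.lower().split()).encode()).hexdigest()
        if key not in seen:
            seen.add(key)
            unique.append(chunk)
    return unique


# Usage: 12 placeholder words, windows of 5 words overlapping by 2.
text = " ".join(f"w{i}" for i in range(12))
for chunk in dedupe(chunk_words(text, size=5, overlap=2)):
    print(chunk)
```

The window size and overlap are tuning knobs. Larger windows keep more context together but dilute retrieval precision, which is exactly the kind of trade-off that needs someone who owns retrieval quality.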

The First Mistake: Hiring for a Buzzword Instead of a Capability

One of the most common mistakes enterprises make is creating hiring plans around fashionable terminology rather than actual system requirements. They search for candidates with “RAG,” “LLM,” or “GenAI” on their resumes and assume that exposure to those terms indicates readiness for production work.

That assumption often leads to poor hiring outcomes.

Someone who has built a basic chatbot that retrieves answers from a handful of static documents may still have no experience with real ingestion pipelines, retrieval tuning, observability, or permission-aware architecture. Another candidate may have strong prompt engineering skills but no practical understanding of vector indexing, enterprise search behavior, or backend service integration. A machine learning engineer may understand models well but have limited exposure to the operational challenges that emerge when enterprise users rely on the system daily.

What enterprises actually need is capability-based hiring. That means evaluating whether the team can cover the specific functions that determine RAG performance in production.

These functions often include:

- Document ingestion and transformation

- Chunking strategy and embedding design

- Vector search and retrieval tuning

- Prompt orchestration and answer grounding

- Backend integration and API reliability

- Monitoring, evaluation, and cost optimization

- Security, access control, and governance

When organizations hire for labels, they build fragile teams around assumptions. When they hire for capabilities, they create systems that are more likely to survive real-world complexity.

The Second Mistake: Treating RAG as a Single-Person Role

Another major mistake is assuming that one “AI engineer” can own the full RAG lifecycle from ingestion to production operations. This expectation is common in early-stage hiring discussions, especially when enterprises are just beginning their GenAI journey. The thinking often goes like this: if a developer can call an LLM API, set up a vector store, and connect a few documents, they can probably build the rest.

That logic collapses quickly in enterprise environments.

RAG systems interact with complex data ecosystems. They need to process multiple document formats, enforce user permissions, manage frequent content updates, and deliver responses quickly under production load. They also need to be monitored continuously so teams can understand whether failures are coming from retrieval quality, poor prompt orchestration, stale source data, latency bottlenecks, or model limitations.

No single hire can be expected to own all of that at a high level.

A strong prototype engineer may be excellent at fast experimentation, but enterprise deployment introduces a different set of demands. Production-grade RAG systems require not only technical depth, but also coordination across disciplines. This is why enterprises that over-index on the “full-stack AI unicorn” often face delays, architectural shortcuts, and unstable systems.

The smarter approach is to define the team around the system, not around a fantasy role. A smaller team can absolutely work, but only if the necessary capability areas are deliberately covered.

The Third Mistake: Underestimating Data Engineering

A large number of RAG projects underperform because enterprises underestimate the role of data engineering. They assume the main challenge lies in the model or the retrieval algorithm, when in reality the root problem is often the state of the underlying enterprise data.

RAG performance depends on the quality, structure, freshness, and accessibility of source content. If the content being ingested is outdated, poorly organized, duplicated, inconsistently tagged, or spread across disconnected repositories, the retrieval layer will struggle no matter how advanced the model is.

This becomes especially clear in enterprise settings where information is rarely centralized or clean. Policies may exist in multiple versions. Product documentation may be split across internal portals. Contracts, reports, tickets, manuals, and process documents may all have different formats and ownership patterns. Some may contain structured data, while others are messy PDFs with tables and scanned text.

Before an enterprise can rely on RAG, it has to solve foundational questions such as:

- Which knowledge sources should be included first?

- How often do those sources change?

- What metadata is needed to improve filtering and ranking?

- How will stale or deprecated content be removed from the index?

- How will ingestion failures be detected and fixed?

- How will permissions be mapped into retrieval logic?

These are data engineering questions as much as AI questions. That is why staffing RAG purely through GenAI hiring often leaves teams exposed. Data engineers are not optional support roles in RAG projects. They are central to making the system trustworthy.
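Two of these questions, removing stale content and mapping permissions into retrieval, often come down to metadata captured at ingestion time. The following is a minimal sketch. It assumes each chunk carries a document ID, a version number, an access-control list of groups, and an optional expiry date. The field names, the `Chunk` type, and the sample records are all hypothetical; real source systems and vector databases expose this information differently.

```python
from dataclasses import dataclass
from datetime import date
from typing import FrozenSet, Optional


@dataclass(frozen=True)
class Chunk:
    doc_id: str
    version: int
    text: str
    allowed_groups: FrozenSet[str]  # hypothetical ACL copied from the source system at ingestion
    expires_on: Optional[date] = None  # hypothetical deprecation date set by the content owner


def latest_versions(chunks):
    """Keep only chunks that belong to the newest version of each document."""
    newest = {}
    for c in chunks:
        newest[c.doc_id] = max(newest.get(c.doc_id, c.version), c.version)
    return [c for c in chunks if c.version == newest[c.doc_id]]


def eligible_chunks(chunks, user_groups, today):
    """Filter applied before similarity ranking: current, unexpired, and visible to this user."""
    return [
        c
        for c in latest_versions(chunks)
        if c.allowed_groups & user_groups
        and (c.expires_on is None or c.expires_on > today)
    ]


# Made-up index: a superseded policy, a restricted document, and an expired notice.
index = [
    Chunk("leave-policy", 1, "Leave policy (old edition)", frozenset({"all"})),
    Chunk("leave-policy", 2, "Leave policy (current edition)", frozenset({"all"})),
    Chunk("salary-bands", 1, "Salary bands by grade", frozenset({"hr"})),
    Chunk("office-notice", 1, "Office closed for renovation", frozenset({"all"}), date(2024, 1, 31)),
]
today = date(2025, 6, 1)
print([c.text for c in eligible_chunks(index, {"all"}, today)])
```

Filtering before ranking means restricted or superseded text never reaches the prompt at all, rather than relying on the LLM to ignore it.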

The Fourth Mistake: Ignoring Search and Relevance Expertise

RAG pipelines are often discussed in AI terms, but at their core, they also depend heavily on search and relevance engineering. Before the LLM generates anything, the retrieval layer has to surface the right context. If the wrong content is retrieved, answer quality drops immediately, regardless of how capable the model may be.

This is where many enterprises make another costly mistake. They invest heavily in LLM talent and API experimentation but overlook the fact that retrieval quality is its own discipline.

Search relevance work includes understanding how enterprise users phrase questions, how documents should be ranked, how metadata affects filtering, how hybrid search can improve results, and how reranking models can refine the final context passed to the LLM. These are not minor optimization tasks. They are often the difference between an assistant that feels helpful and one that feels unreliable.

This is especially important in industries with dense terminology or highly regulated knowledge environments. In sectors such as healthcare, financial services, legal operations, and industrial manufacturing, small variations in wording can significantly change the meaning of a user query. Generic retrieval setups often fail in these environments because they are not tuned to domain-specific patterns.

Enterprises that ignore relevance expertise often end up blaming the model for what is actually a retrieval problem.
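Hybrid search, one of the relevance levers mentioned above, usually means running a keyword ranker (such as BM25) and a vector ranker side by side and then merging their results. One widely used merging method is reciprocal rank fusion (RRF). In RRF, each document's fused score is the sum of 1 / (k + rank) over the lists it appears in. The sketch below assumes each ranker has already returned an ordered list of document IDs. The input lists are made up, and `k = 60` is the constant commonly used with RRF rather than a value tuned for any particular corpus.

```python
def reciprocal_rank_fusion(rankings, k=60):
    """Merge ranked lists of doc IDs: each list adds 1 / (k + rank) to a document's score."""
    scores = {}
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] = scores.get(doc_id, 0.0) + 1.0 / (k + rank)
    # Highest fused score first; ties broken by doc ID so the output is deterministic.
    return sorted(scores, key=lambda doc_id: (-scores[doc_id], doc_id))


keyword_hits = ["policy-7", "faq-2", "memo-9"]  # e.g. from a keyword index (hypothetical IDs)
vector_hits = ["faq-2", "guide-4", "policy-7"]  # e.g. from an embedding index (hypothetical IDs)
print(reciprocal_rank_fusion([keyword_hits, vector_hits]))
# ['faq-2', 'policy-7', 'guide-4', 'memo-9']
```

Because RRF uses only ranks, it avoids calibrating keyword scores against cosine similarities, which live on different scales. A reranking model would typically be applied to the top of this fused list before the context is passed to the LLM.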

The Fifth Mistake: Building RAG Without LLMOps Thinking

Even when enterprises successfully build a working RAG system, many still fail to operate it effectively. This happens when they focus entirely on initial implementation and ignore the operational layer required to maintain quality over time.

RAG systems are not static. Documents change. Users ask new kinds of questions. Retrieval performance shifts as content grows. Prompt strategies need refinement. Costs can increase as usage expands. Models may behave differently after upgrades. Without a way to monitor and evaluate these variables, teams lose visibility into what the system is actually doing.

This is where LLMOps thinking becomes essential.

A mature RAG deployment needs operational discipline in areas such as:

- Retrieval quality evaluation

- Groundedness and answer accuracy tracking

- Latency and system performance monitoring

- Token usage and cost visibility

- Prompt versioning and experimentation

- Feedback loops from real users

- Incident detection and continuous optimization

Enterprises that skip this layer often end up with RAG systems that appear to work but are impossible to improve systematically. Over time, trust erodes because no one can clearly explain why quality is improving, declining, or fluctuating.

Hiring for RAG without accounting for LLMOps is one of the most overlooked enterprise mistakes in GenAI today.
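Retrieval quality evaluation, the first item in the list above, can start small. The idea is to take a set of real user questions, label each one with the documents that should be retrieved, and score the pipeline on every change. The following is a minimal sketch that assumes such a labeled set exists. Recall@k and mean reciprocal rank (MRR) are standard retrieval metrics, while the function names and sample data are hypothetical.

```python
def recall_at_k(retrieved, relevant, k):
    """Fraction of the relevant documents that appear in the top k retrieved results."""
    if not relevant:
        return 0.0
    return len(set(retrieved[:k]) & set(relevant)) / len(relevant)


def reciprocal_rank(retrieved, relevant):
    """1 / rank of the first relevant result, or 0.0 if nothing relevant was retrieved."""
    for rank, doc_id in enumerate(retrieved, start=1):
        if doc_id in relevant:
            return 1.0 / rank
    return 0.0


def evaluate(runs, k=3):
    """Average recall@k and MRR over (retrieved, relevant) pairs, one pair per test question."""
    if not runs:
        raise ValueError("need at least one labeled question")
    n = len(runs)
    return {
        f"recall@{k}": sum(recall_at_k(ret, rel, k) for ret, rel in runs) / n,
        "mrr": sum(reciprocal_rank(ret, rel) for ret, rel in runs) / n,
    }


runs = [
    (["a", "b", "c", "d"], {"b", "d"}),  # one of two relevant docs in the top 3; first hit at rank 2
    (["x", "y", "z"], {"x"}),  # the only relevant doc is ranked first
]
print(evaluate(runs, k=3))
```

Tracking numbers like these per release, alongside groundedness, latency, and cost, gives a team a concrete way to tell whether a prompt, chunking, or model change actually helped.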

What Production-Ready RAG Teams Actually Look Like

Enterprises that succeed with RAG rarely depend on one or two generalists alone. Instead, they build cross-functional teams aligned to the real architecture of the system. The exact team size may vary depending on project scope, but the capability map tends to remain consistent.

A production-ready RAG team often includes:

- LLM or AI Engineers who handle orchestration, prompting, model interaction, and answer design

- Data Engineers who build ingestion pipelines, transformation logic, indexing workflows, and document refresh mechanisms

- Backend Engineers who integrate the system into enterprise applications, APIs, and workflows

- Search or Relevance Specialists who improve retrieval quality, ranking logic, and hybrid search performance

- LLMOps or Platform Engineers who monitor performance, optimize cost, manage deployments, and improve reliability over time

- Security and Governance Experts who ensure access control, compliance alignment, and safe handling of enterprise data

Not every enterprise needs all of these roles as separate hires on day one. But every enterprise does need these capabilities represented somewhere in the delivery model. That may happen through internal hiring, cross-functional engineering teams, external specialists, or staff augmentation. What matters is not the org chart title. What matters is whether the system’s critical layers are truly covered.

Why India Is Becoming Important for RAG Staffing

India is becoming an increasingly important market for enterprise RAG staffing because it offers a strong combination of adjacent capabilities that RAG systems depend on. The country has deep talent pools in software engineering, data engineering, cloud infrastructure, backend development, and, increasingly, Generative AI delivery.

That combination matters because RAG is not a narrow AI problem. It exists at the intersection of retrieval, data systems, enterprise application engineering, infrastructure, and AI operations. Markets that provide only model-focused talent are often insufficient. Enterprises need ecosystems where multiple engineering disciplines can work together.

India’s expanding Global Capability Center ecosystem is accelerating this shift. More global enterprises are building AI platforms, enterprise search functions, and GenAI delivery teams in India. As a result, the local talent base is becoming more experienced with real production environments rather than just prototypes.

For global companies, this makes India strategically important not just for cost efficiency, but for capability building. However, competition for experienced RAG talent is growing. Enterprises cannot rely on generic hiring methods. They need staffing strategies that accurately identify production-ready skills.

What Enterprises Should Do Differently

The most important step enterprises can take is to redefine the problem correctly. A RAG system is not simply a chatbot with retrieval attached to it. It is a multi-layered enterprise system that depends on data integrity, retrieval relevance, orchestration quality, application engineering, and operational control.

That means hiring plans should begin with architecture, not assumptions.

Instead of opening a vague role for a “RAG expert,” enterprises should identify the exact capabilities their system requires. If ingestion quality is weak, they need stronger data engineering. If answers are irrelevant, they may need retrieval and relevance expertise. If the system works but costs are climbing, LLMOps capability becomes more important. This kind of clarity leads to better hiring decisions and stronger delivery outcomes.

Enterprises also need to evaluate real production experience more rigorously. It is not enough to ask whether a candidate has worked with LangChain, Pinecone, or a particular LLM provider. Teams should ask what the candidate optimized, where the system broke, how they handled stale documents, how they measured retrieval quality, and how they reduced hallucinations in practice. Those answers reveal whether someone understands RAG as a system or merely as a toolkit.

Most importantly, enterprises must shift from hiring isolated talent to building coordinated team structures. That is the real difference between a promising prototype and a dependable enterprise capability.

Conclusion

RAG pipelines have become one of the most important enterprise architectures in Generative AI because they make LLMs more grounded, useful, and context-aware. But they also expose a central truth about enterprise AI adoption: success depends less on access to models and more on engineering discipline.

What many enterprises get wrong is not the potential of RAG. It is the hiring logic behind it.

They hire for buzzwords instead of capabilities. They expect one person to own a system that spans multiple engineering layers. They underinvest in data engineering and search relevance. They treat operations as an afterthought instead of a core requirement. And when performance disappoints, they often blame the model instead of the system design.

The enterprises that get RAG right approach it differently. They understand that retrieval is infrastructure, not decoration. They invest in the right team structure. They treat data quality, relevance tuning, backend integration, and LLMOps as first-class concerns. And they staff accordingly.

That is what turns RAG from a promising experiment into a reliable enterprise capability.

Frequently Asked Questions (FAQ)

Q1. What skills are needed to build a RAG pipeline?

Q2. Why do RAG projects fail in enterprises?

Q3. Do you need a data engineer for a RAG pipeline?

Q4. What roles should an enterprise RAG team include?

Q5. How do enterprises hire for RAG pipelines the right way?

About Author

CEO at gNxt Systems

With 25+ years of expertise, Mr. Anoop Jain delivers complex projects, drives innovation through IT strategies, and inspires teams to achieve milestones in a competitive, technology-driven landscape.