- May 12, 2026

- by Anoop Jain

AI Models Are Shipping Monthly. You’re Still Hiring Quarterly. The Math Doesn’t Work

Let’s count what happened in AI between January and April 2026. Four months. Sixteen weeks.

OpenAI released GPT-5.2, then GPT-5.3-Codex, then GPT-5.4 — three distinct model generations in under ninety days. Google shipped Gemini 3.1 Pro and Flash-Lite. Anthropic launched Claude Opus 4.6 and Sonnet 4.6. xAI dropped Grok 4.20. On the open-source side, Mistral shipped Small 4, Zhipu AI pushed GLM-4.7 to the top of coding benchmarks, and Alibaba released models matching frontier performance at a fraction of the cost.

MCP crossed 97 million monthly installs. The Agentic AI Foundation went live under the Linux Foundation. GPT-5.4 scored 75% on OSWorld — beating human experts at computer use. Agentic workflows moved from conference demos to production infrastructure. The EU AI Act compliance timeline accelerated.

That was four months.

Now let’s count what happened in your hiring pipeline during those same four months. You posted a job description for a senior GenAI engineer. It took three weeks to get HR approval. Two weeks to finalize the listing. Six weeks of sourcing and screening. You found a strong candidate in week twelve. They’re in final-round interviews as you read this. If everything goes perfectly — the offer is accepted, the notice period is reasonable, onboarding goes smoothly — they’ll be writing their first line of production code sometime around month seven.
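As a rough check on that timeline, the stages can simply be added up. In the sketch below, only the first three durations come from the paragraph above. The remaining durations, and the names `STAGES` and `cumulative_timeline`, are illustrative assumptions chosen to show how the total could land near month seven.

```python
# Hiring stages as (name, duration in weeks).
# The first three durations come from the article; the rest are
# illustrative assumptions, not reported figures.
STAGES = [
    ("HR approval", 3),
    ("Finalize listing", 2),
    ("Sourcing and screening", 6),
    ("Interview loop (assumed)", 5),
    ("Offer and negotiation (assumed)", 2),
    ("Notice period (assumed)", 9),
    ("Onboarding and ramp-up (assumed)", 3),
]

WEEKS_PER_MONTH = 52 / 12  # average calendar month length in weeks


def cumulative_timeline(stages):
    """Return (stage name, weeks elapsed, months elapsed) after each stage."""
    elapsed_weeks = 0
    timeline = []
    for name, weeks in stages:
        elapsed_weeks += weeks
        timeline.append((name, elapsed_weeks, elapsed_weeks / WEEKS_PER_MONTH))
    return timeline


if __name__ == "__main__":
    for name, weeks, months in cumulative_timeline(STAGES):
        print(f"{name:<36} week {weeks:>2}  (~month {months:.1f})")
```

With these assumed durations the total is 30 weeks, a little under seven months. A shorter notice period or a faster interview loop shifts the result accordingly.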

By month seven, two more model generations will have shipped. The frameworks your job description referenced will have evolved. The agentic patterns you hired for may have been superseded. And your competitor, who brought in three augmented specialists in January, will have shipped two production AI systems and moved on to its next initiative.

The math doesn’t work. It hasn’t worked for over a year. And yet most companies are still running the same playbook, expecting different results.

The Cadence Mismatch That’s Quietly Killing AI Roadmaps

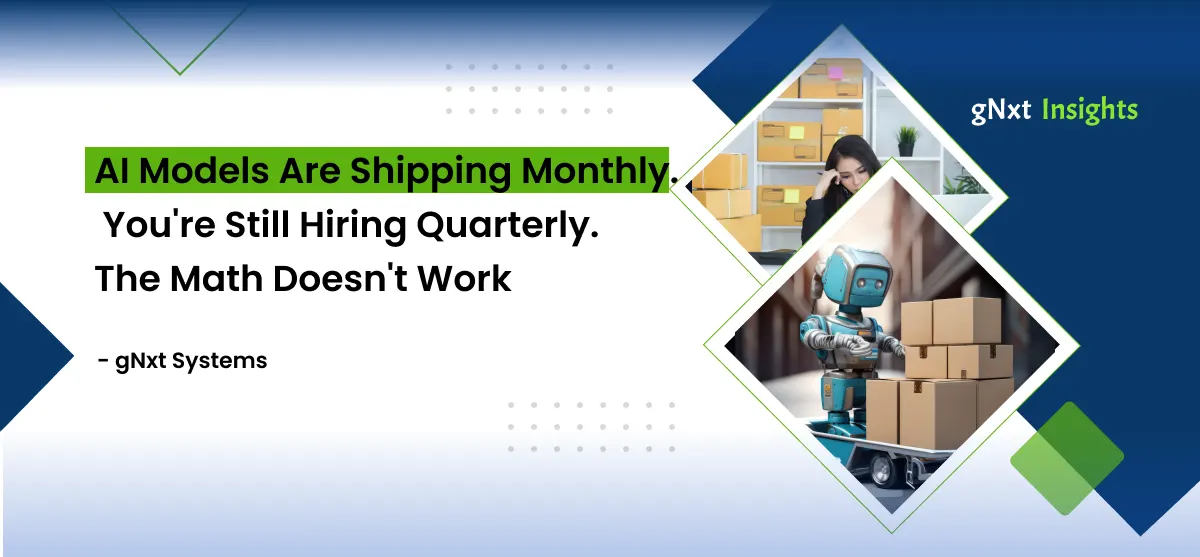

There’s a specific structural problem that most leadership teams haven’t fully internalized. It’s not that AI is moving fast — everyone knows that. It’s that the speed of AI capability evolution and the speed of organizational talent acquisition operate on fundamentally incompatible timescales.

AI capability ships on a weekly-to-monthly cadence. Major model releases every few weeks. Framework updates every month. New architectural patterns — agentic workflows, MCP integration, multi-model orchestration — reaching production maturity in quarters, not years. The frontier doesn’t pause. It doesn’t wait for your budget cycle. It doesn’t care that your recruiter is still sourcing.

Talent acquisition operates on a quarterly-to-biannual cadence. Job descriptions take weeks to approve. Sourcing takes months. Interview loops stretch across weeks. Offers take time to negotiate. Notice periods add more weeks. Onboarding and ramp-up extend the timeline further. From “we need this skill” to “this person is contributing meaningful work,” the realistic timeline is four to six months — and that’s when everything goes smoothly.

The gap between these two cadences isn’t shrinking. It’s widening. Every month, the AI frontier advances. Every month, the hiring pipeline runs at roughly the same speed. The cumulative effect is a growing deficit: an expanding distance between what the technology makes possible and what your team can actually execute.

This isn’t a temporary problem caused by a hot job market. It’s a structural mismatch between how AI evolves and how companies staff for it. And no amount of optimization within the traditional hiring model can close the gap, because the model itself operates at the wrong clock speed.

What One Quarter of Delay Actually Costs

The cost of this cadence mismatch isn’t abstract. It compounds in specific, measurable ways that most companies don’t track because they don’t have a line item for “things we should have shipped but couldn’t.”

Consider a mid-market SaaS company that identified an agentic AI opportunity in January 2026 — an intelligent customer onboarding system that uses AI agents to guide new users through setup, configuration, and first-value realization. The business case was clear: reduce time-to-value from fourteen days to three, cut support tickets by 40%, improve retention at the critical 30-day mark.

The company started hiring in January. By April, they hadn’t closed a single offer for the agentic AI architect role. Two candidates declined. One accepted and then rescinded during the notice period. The internal team attempted to prototype with existing skills, but nobody had production experience with multi-agent orchestration or MCP integration. The prototype was underwhelming. The project stalled.

Meanwhile, a competitor with a similar product and similar ambitions took a different approach. They engaged two augmented specialists in January — an agentic AI architect and an MCP integration engineer. By late February, the architecture was designed. By late March, the system was in beta. By mid-April, it was in production, onboarding real customers, generating real retention improvements, and creating a real competitive moat.

One quarter. Same opportunity. Opposite outcomes. The difference wasn’t strategy, budget, or vision. It was the speed at which each company could put the right skills on the problem.

Multiply this scenario across every AI initiative on your roadmap (fraud detection, intelligent automation, GenAI-powered search, predictive analytics, compliance systems) and the cumulative cost of quarterly-speed hiring against monthly-speed technology becomes staggering. It’s not just lost features. It’s lost market position, lost revenue, lost customer trust, and lost competitive differentiation that becomes harder to recover with every quarter.

The Job Description Obsolescence Problem

There’s a secondary pathology within the cadence mismatch that deserves its own examination, because it’s one of the least discussed and most damaging dynamics in AI hiring.

Job descriptions become obsolete during the hiring process itself.

In January 2026, a company posts a listing for a GenAI engineer with experience in RAG pipelines and LLM integration. Reasonable requirements for January. But by the time a candidate is identified, interviewed, offered, and onboarded — let’s say June — the landscape has shifted. GPT-5.4 has shipped with unified coding and agentic capabilities. MCP has become foundational infrastructure. The company’s actual needs have evolved from “build a RAG chatbot” to “build an agentic system that connects to our enterprise tools via MCP and operates autonomously.”

The person you hired in January’s job market may not be the person you need in June’s technology landscape. Not because they’re incompetent — they might be excellent. But because the specific skill profile the market demands has shifted faster than your hiring process moved.

This creates a perverse cycle. You hire for what you needed when you started looking, not for what you need when the person arrives. Then you discover the gap and start another hiring cycle for the skills you actually need now. Four to six more months. By which time the landscape has shifted again.

Traditional hiring treats roles as static. AI treats everything as dynamic. The collision between these two assumptions is where roadmaps go to die.

Why “Hire Faster” Is the Wrong Answer

The intuitive response to the cadence mismatch is to speed up hiring. Streamline the process. Reduce interview rounds. Offer faster. Onboard faster.

These are reasonable operational improvements. They are also completely inadequate for the scale of the problem.

Even if you cut your hiring cycle in half — from six months to three — you’re still operating at a quarterly cadence in a monthly world. You’ve improved from three model generations behind to one and a half model generations behind. Better, but not enough.
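The arithmetic behind that comparison can be made explicit. The sketch below assumes a fixed interval between major model generations. The two-month default is inferred from the figures above (six months, three generations) rather than stated in the text, and the function name `generations_behind` is hypothetical.

```python
def generations_behind(hiring_cycle_months, months_per_generation=2.0):
    """Model generations released while one hiring cycle runs.

    months_per_generation is an assumed, constant release interval.
    """
    if months_per_generation <= 0:
        raise ValueError("months_per_generation must be positive")
    return hiring_cycle_months / months_per_generation


if __name__ == "__main__":
    for cycle in (6, 3):
        for interval in (2.0, 1.0):
            behind = generations_behind(cycle, interval)
            print(f"{cycle}-month cycle, one generation every "
                  f"{interval:g} months: {behind:g} generations behind")
```

At the two-month interval, halving the cycle moves the result from 3 to 1.5 generations. At a strictly monthly release pace the same halving would move it from 6 to 3. Either way the lag is proportional to cycle length, so it shrinks but never reaches zero.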

And the constraints on hiring speed aren’t just process inefficiencies you can optimize away. They’re structural realities of the talent market.

There aren’t enough qualified candidates. Globally, there are an estimated 3.2 open AI roles for every qualified candidate. For emerging specializations like agentic AI architecture and MCP integration, the ratio is dramatically worse. You can’t hire faster when the candidates don’t exist in sufficient numbers.

The best candidates aren’t looking. The engineers with the most current, most valuable skills are already employed — usually at frontier AI companies or in high-demand consulting arrangements. They’re not browsing job boards. They’re not responding to cold outreach from recruiters who can’t distinguish MCP from MQTT.

Notice periods are non-negotiable. Even when you find and close a great candidate, most senior engineers have 30-to-90-day notice periods. You can’t optimize this away. It’s contractual.

The right answer isn’t “hire faster.” It’s “decouple your capability timeline from your hiring timeline entirely.” Staff for the speed of the technology, not the speed of recruitment.

The Augmented Cadence: Matching Talent Speed to Technology Speed

AI staff augmentation solves the cadence mismatch by operating on the same timescale as the technology itself.

When a new frontier model ships with capabilities your roadmap can exploit, you don’t post a job listing. You call your augmentation partner. Within one to two weeks, a specialist who’s already building with those capabilities — at other companies, in production, right now — is onboarded into your team and executing.

When the agentic AI landscape shifts and your current initiative needs MCP integration that nobody on your team has built before, you don’t start a three-month recruiting process. You embed an MCP engineer who has deployed servers at three other enterprises and has already worked through the authentication, governance, and scaling challenges your team is about to encounter.

When a regulatory deadline accelerates and you need AI governance expertise in six weeks, not six months, you don’t hope your data scientists can self-teach compliance frameworks from blog posts. You bring in a specialist who’s already navigated the EU AI Act requirements at other companies in your industry.

The augmented model doesn’t just move faster. It matches the cadence of the technology itself. Monthly model releases? Monthly capability to build with them. Quarterly framework shifts? Quarterly access to engineers who’ve mastered them. This is the only workforce model that keeps pace with how AI actually evolves.

And it compounds over time. Every augmented engagement includes knowledge transfer — pair programming, documentation, workshops, structured handoffs. So your internal team isn’t just watching. They’re learning. Each engagement makes the permanent team more capable, more current, and more self-sufficient. After three or four augmented sprints, your internal engineers have absorbed skills that would have taken them years to develop in isolation.

The augmented cadence doesn’t replace internal teams. It accelerates them.

What the Right Operating Model Looks Like

The companies that have solved the cadence mismatch — the ones consistently shipping AI at the speed the technology enables — share a common operating model. It’s not complicated. But it requires abandoning the assumption that every capability must be permanently staffed.

A lean, permanent core team — typically three to five engineers — owns strategy, institutional knowledge, data architecture, and long-term technical vision. These are the people who understand your business deeply enough to translate AI capabilities into business value. They set direction. They own governance. They provide the continuity that holds the roadmap together across initiatives.

A rotating augmented specialist layer that flexes with the technology cadence. When a new model generation creates new product opportunities, augmented GenAI engineers join the team to exploit them. When the initiative shifts to production deployment, an augmented MLOps specialist builds the pipeline. When compliance deadlines approach, a governance engineer embeds for the sprint. When the initiative completes, they transfer knowledge and roll off.

A quarterly capability review where the core team and their augmentation partner assess what’s changed in the technology landscape, what new skills are needed for the next roadmap phase, and what internal capabilities have matured enough to bring in-house permanently.

This model does three things the traditional approach can’t. It keeps your team current with the technology frontier without requiring you to predict six months in advance which specific skills you’ll need. It converts massive fixed payroll costs into targeted variable investments that map to actual project phases. And it builds internal capability over time through knowledge transfer, so the core team gets stronger with every engagement.

It’s not a workaround. It’s the operating model designed for how AI actually works in 2026.

The Companies That Get This Are Already Winning

This isn’t a theoretical argument. The evidence is in the shipping logs.

The fintech that deployed fraud detection in ninety days while its competitor spent those same ninety days still interviewing candidates. The SaaS company that launched an agentic customer onboarding system a full quarter before its nearest competitor because it augmented instead of hiring. The healthcare company that met its EU AI Act compliance deadline with two months to spare because it embedded a governance specialist instead of waiting to find one on the open market.

In every case, the technology was available to everyone. The strategy was similar across competitors. The difference was the speed at which specialized talent was put on the problem.

That’s the math. AI ships monthly. Your hiring cycle runs quarterly at best. The delta between those two speeds isn’t a minor inefficiency. It’s the difference between leading your market and chasing it.

The Decision Is Simpler Than You Think

You don’t need to overhaul your entire talent strategy overnight. You don’t need to fire your recruiters or abandon full-time hiring. You need to do one thing: stop assuming that permanent headcount is the only path to AI capability.

For the roles that require deep institutional knowledge and long-term strategic ownership — keep hiring for those. Your AI strategy lead. Your senior data architects. Your engineering managers.

For the specialized, frontier-speed skills that evolve faster than any hiring process can match — augment. Agentic AI architects. MCP integration engineers. MLOps specialists. Fine-tuning experts. AI governance engineers. The roles where the technology moves monthly and the talent pool is measured in hundreds.

Own the core. Augment the frontier. Ship at the speed of the technology, not the speed of HR.

The math is simple. The decision should be too.

References & Sources

- OpenAI — “Introducing GPT-5.4” (March 5, 2026) — https://openai.com/index/introducing-gpt-5-4/

- Mean CEO Blog — “New AI Model Releases News | April 2026 (Startup Edition)” (April 2026) — https://blog.mean.ceo/new-ai-model-releases-news-april-2026/

- AI2Work — “Model Context Protocol Hits 97M Installs as Linux Foundation Takes Over” (April 2026) — https://ai2.work/blog/model-context-protocol-hits-97m-installs-as-linux-foundation-takes-over

- Second Talent — “Top 50+ Global AI Talent Shortage Statistics 2026” (April 2026) — https://www.secondtalent.com/resources/global-ai-talent-shortage-statistics/

- Deloitte Insights — “Agentic AI Strategy” (February 2026) — https://www.deloitte.com/us/en/insights/topics/technology-management/tech-trends/2026/agentic-ai-strategy.html

About Author

CEO at gNxt Systems

With more than 25 years of experience, Mr. Anoop Jain delivers complex projects, drives innovation through IT strategy, and inspires teams to reach milestones in a competitive, technology-driven landscape.