- April 20, 2026

- by Anoop Jain

Your AI Team Is Too Expensive, Too Slow, and Too Small. Let’s Fix All Three

A Diagnosis Nobody Wants to Hear

Let’s skip the pleasantries and get to the uncomfortable truth.

Your AI initiative is underperforming. Not because the technology isn’t ready — it is. Not because your leadership lacks vision — they probably don’t. It’s underperforming because the team you’ve built to execute it is caught in a trap that no amount of motivational Slack messages or hackathon Fridays can fix.

The trap has three jaws, and they’re all biting at the same time.

Your AI team is too expensive. You’re spending $500K to $1 million per year on a handful of specialists, and you’re still not shipping fast enough to justify the investment. Every quarter, someone in finance squints at the AI line item and asks what exactly they’re getting for the money.

Your AI team is too slow. The average time to hire a senior ML engineer in 2026 sits between four and six months. By the time your new hire has onboarded, ramped up, and started contributing meaningfully, the frameworks they were hired for might already be outdated. Meanwhile, your roadmap gathers dust.

Your AI team is too small. Even after months of hiring, you still don’t have the right specializations. You have a data scientist who’s great at modeling but has never deployed anything to production. You have an engineer who can build APIs in their sleep but doesn’t understand how to evaluate LLM outputs. The team has gaps, and those gaps are silently killing your velocity.

These three problems aren’t separate. They feed each other. The expense makes it impossible to hire more people, the slowness makes existing people feel overwhelmed, and the size limitations force everyone to work outside their expertise. It’s a vicious cycle that compounds with every passing quarter.

But here’s the good news: all three are fixable. Not in twelve months. Not after your next funding round. Now.

Problem #1: Too Expensive

Let’s lay out the math that most companies avoid doing honestly.

A single senior AI engineer in the United States commands a base salary of $150,000 to $200,000. Add a data scientist, an MLOps engineer, and a product manager, and you’re looking at $500,000 to $1 million in annual salary costs — before any infrastructure or tooling budget. That’s just base compensation. Layer on benefits, equity, recruiting fees, office overhead, management time, and the invisible cost of the months it took to fill each role, and the actual number is significantly higher.

For mid-market companies — the ones with $30M to $200M in revenue, trying to integrate AI into their products and operations — this cost structure is brutal. You’re essentially betting a million dollars a year that a small group of people will deliver transformational results, while simultaneously knowing that the team isn’t big enough or specialized enough to cover everything on the roadmap.

And here’s what nobody says out loud: a significant portion of that spend is wasted. Not because the people are incompetent — usually, they’re excellent. But because they’re being asked to do things outside their core expertise. Your ML engineer is debugging deployment pipelines because you don’t have an MLOps specialist. Your data scientist is writing production code because you can’t justify another engineering headcount. Your team lead is spending 40% of their time interviewing candidates instead of reviewing architectures.

You’re paying premium salaries for premium talent, and then burning that talent on work that doesn’t match their skills. It’s like buying a Formula 1 car and using it to deliver groceries.

The fix: Stop trying to own every capability permanently. Maintain a lean, high-impact core team — the people who understand your business, your data, and your long-term AI strategy. Then augment around that core with specialists who bring the exact skills you need, for exactly the duration you need them.

A three-month AI staff augmentation engagement with two to three specialists typically costs $150,000 to $340,000. That sounds expensive until you compare it to twelve months of salary for full-time hires who take four months to find and two months to ramp up — and who you’re paying year-round even during periods when their specific skills aren’t fully utilized.

The augmented model converts a massive fixed cost into a targeted variable cost. You’re paying for output, not for attendance.
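To make the comparison above easier to check, here is a minimal sketch of the arithmetic. It uses only figures quoted in this section, and it assumes (purely for illustration) that every full-time hire falls in the senior AI engineer base-salary range. Benefits, equity, and recruiting fees are left out, so the full-time numbers are a floor rather than an estimate. The function names are hypothetical.

```python
# All figures come from the article; base salary only, so benefits, equity,
# recruiting fees, and overhead are deliberately left out.
SENIOR_BASE_SALARY = (150_000, 200_000)  # USD per year, per engineer
ENGAGEMENT_COST = (150_000, 340_000)     # USD, three months, two to three specialists
RAMP_UP_MONTHS = 2                       # paid months before full contribution


def annual_base(headcount, salary=SENIOR_BASE_SALARY):
    """Return (low, high) yearly base salary for `headcount` full-time hires."""
    return headcount * salary[0], headcount * salary[1]


def ramp_up_spend(headcount, months=RAMP_UP_MONTHS, salary=SENIOR_BASE_SALARY):
    """Return (low, high) base salary paid while the hires are still ramping up."""
    low, high = annual_base(headcount, salary)
    return low * months / 12, high * months / 12


for headcount in (2, 3):
    low, high = annual_base(headcount)
    ramp_low, ramp_high = ramp_up_spend(headcount)
    print(f"{headcount} full-time hires: ${low:,.0f} to ${high:,.0f} per year, "
          f"of which ${ramp_low:,.0f} to ${ramp_high:,.0f} is ramp-up")

print(f"3-month engagement: ${ENGAGEMENT_COST[0]:,} to ${ENGAGEMENT_COST[1]:,}")
```

Under these assumptions, two full-time hires cost $300,000 to $400,000 a year in base salary and three cost $450,000 to $600,000, against $150,000 to $340,000 for the engagement. The engagement covers three months rather than twelve, so the comparison only holds if the specialized work really is concentrated in that window.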

Problem #2: Too Slow

Speed in AI isn’t just about how fast your engineers write code. It’s about the entire cycle: from identifying a capability gap to having the right specialist working on the problem.

Under a traditional hiring model, that cycle looks like this: two to four weeks writing and approving a job description, four to eight weeks sourcing and screening candidates, two to four weeks of technical interviews and panel reviews, one to three weeks of offer negotiation and acceptance, two to eight weeks waiting out notice periods, and another four to eight weeks of onboarding and ramp-up. Add it all up and the stages span 15 to 35 weeks, with a typical run landing at four to six months from “we need this skill” to “this person is contributing meaningfully.”
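The stage ranges quoted above can be added up directly. The sketch below does that, assuming the stages run strictly one after another with no overlap and using an average month of 52/12 weeks. Both are simplifying assumptions, and the names are illustrative.

```python
# Stage durations in weeks (low, high), taken from the paragraph above.
HIRING_STAGES = {
    "job description": (2, 4),
    "sourcing and screening": (4, 8),
    "interviews and panels": (2, 4),
    "offer negotiation": (1, 3),
    "notice period": (2, 8),
    "onboarding and ramp-up": (4, 8),
}

WEEKS_PER_MONTH = 52 / 12  # assumption: average month length in weeks


def total_weeks(stages):
    """Return (low, high) total weeks, assuming stages run strictly in sequence."""
    low = sum(lo for lo, _ in stages.values())
    high = sum(hi for _, hi in stages.values())
    return low, high


low_weeks, high_weeks = total_weeks(HIRING_STAGES)
print(f"{low_weeks} to {high_weeks} weeks")
print(f"{low_weeks / WEEKS_PER_MONTH:.1f} to {high_weeks / WEEKS_PER_MONTH:.1f} months")
```

The stages sum to 15 to 35 weeks, or roughly 3.5 to 8 months. The four-to-six-month figure sits in the middle of that range, so a slow search or a long notice period can push a hire well past it.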

In AI, four to six months is a geological epoch. The model architectures, tooling, and best practices that were cutting-edge when you posted the job listing may be obsolete by the time your hire starts their first sprint. A job description written for someone who can build with a specific framework might be irrelevant by the time the offer letter is signed because the ecosystem has shifted underneath it.

This isn’t hypothetical. It’s happening constantly. Teams that began hiring for GPT-4 integration specialists in late 2024 are now discovering that the skills they screened for don’t map cleanly to the agentic AI systems their business actually needs in 2026. The GenAI landscape moves faster than any hiring process was designed to accommodate.

And then there’s the compounding cost of delay. Every month your fraud detection model isn’t in production, you’re absorbing losses that the model would have prevented. Every quarter your AI-powered analytics dashboard remains a mockup, your sales team is pitching with outdated capabilities. Every sprint your overloaded team spends fighting fires instead of building new features, your competitors gain ground.

The fix: Decouple your capability timeline from your hiring timeline. AI staff augmentation lets you place pre-vetted, production-experienced specialists on your team within one to two weeks — not one to two quarters. These aren’t random contractors hoping to learn on the job. They’re engineers who’ve built the exact systems you’re trying to build, at companies similar to yours, and can start contributing meaningful code within days of onboarding.

The speed advantage isn’t marginal. It’s structural. You compress a six-month hiring cycle into a two-week placement cycle, and you eliminate the ramp-up period almost entirely because you’re engaging someone who’s already done the work before.

Problem #3: Too Small

Even companies that have successfully hired AI talent almost always end up with a team that’s too narrow. Not too few people necessarily — but too few specializations.

This is the hidden problem that doesn’t show up in headcount reports but shows up everywhere in delivery metrics. Your team has three ML engineers but no one who’s built a production deployment pipeline. You have a brilliant researcher who can design novel architectures but can’t write production-grade code. You have engineers who understand traditional software systems but have never worked with vector databases, embedding models, or retrieval-augmented generation.

The reason is straightforward: AI in 2026 isn’t one discipline. It’s a cluster of rapidly diverging specializations, each requiring deep expertise that takes years to develop. The skills needed to fine-tune a foundation model are fundamentally different from the skills needed to build an MLOps pipeline, which are fundamentally different from the skills needed to design an agentic AI system, which are fundamentally different from the skills needed to navigate AI governance and compliance.

No team of four or five people — no matter how talented — can cover all of these bases. And the more you try to make generalists do specialist work, the more you get mediocre results across the board instead of excellent results in the areas that actually matter.

This creates a pattern that engineering leaders know painfully well: the initiative is perpetually blocked on something that nobody is truly qualified to do. The data scientists build a model that works beautifully in a notebook, but it sits there for months because nobody knows how to get it into production reliably. The production engineers deploy a model but can’t figure out why it’s drifting, because nobody has the monitoring and evaluation expertise. Everybody is busy. Nobody is idle. But the overall initiative barely moves.

The fix: Stop trying to build a team that can do everything and start building a team that can do the most important things — augmented by specialists who fill the gaps as they appear.

This is where AI and ML staff augmentation changes the game completely. Instead of hiring a full-time MLOps engineer you’ll need intensely for three months and sporadically after that, you bring in an MLOps specialist for a defined engagement. Instead of expecting your data scientists to suddenly become GenAI architects, you augment with someone who’s built RAG systems and agentic workflows at three other companies. Instead of hoping your backend engineers can figure out AI compliance before the EU AI Act deadline hits, you embed a governance specialist who’s already navigated it.

Your team doesn’t need to be bigger. It needs to be more complete — on demand, for the specific challenges you’re facing right now.

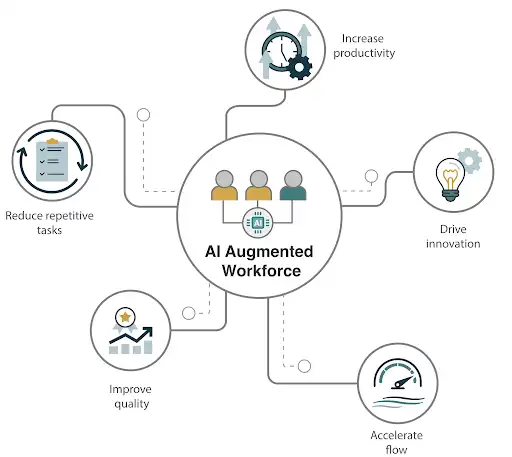

The Unified Fix: The Augmented AI Team Model

The three problems — cost, speed, and size — all share one root cause: the assumption that every AI capability your company needs must be permanently staffed.

That assumption made sense in a world where skills were stable, talent was accessible, and technology evolved at a pace that hiring processes could match. None of those conditions exist in 2026.

The augmented AI team model replaces that assumption with a more rational operating principle: own the core, augment the rest.

The core is the small, permanent team that holds your institutional knowledge, data understanding, stakeholder relationships, and long-term AI strategy. These are the people who know your business inside out. They set direction. They own governance. They’re the thread of continuity that holds the roadmap together.

The augmented layer is the rotating set of specialists who bring specific, deep expertise for defined phases of work. A fine-tuning specialist for model optimization. An MLOps engineer to build the deployment pipeline. A GenAI architect for a three-month product sprint. A compliance engineer before a regulatory milestone.

Together, the core and the augmented layer create a team that is cheaper than a full in-house build (because you’re only paying for specialized skills when you need them), faster than traditional hiring (because augmented specialists can be placed within one to two weeks and start contributing within days of onboarding), and more complete than any fixed team could be (because you access the full breadth of AI specializations without permanently staffing each one).

This isn’t a theoretical framework. It’s how the most effective AI organizations in the world are operating right now.

What This Looks Like in Practice

Let’s make this concrete. Imagine a company with an AI roadmap that includes three initiatives over the next twelve months: deploying an ML-powered demand forecasting model, building a GenAI-powered internal knowledge assistant, and preparing all AI systems for regulatory compliance.

The pure in-house approach: You’d need to hire a senior ML engineer, a GenAI/LLM specialist, an MLOps engineer, and an AI governance specialist — minimum four new headcount, costing roughly $800K to $1.2M per year in fully-loaded compensation. Realistic hiring timeline: five to eight months before the team is complete and ramped. That’s assuming you successfully close every offer on the first attempt, which — given the competitive market — is optimistic.

The augmented approach: You maintain your existing core team of two to three engineers who understand the business and data. For initiative one, you augment with an ML engineer and an MLOps specialist for a 90-day sprint. For initiative two, you augment with a GenAI architect for 60 days. For initiative three, you bring in a compliance specialist for 45 days. Total augmentation cost: roughly $350K to $500K. Timeline to full delivery: eight to ten months, with the first initiative live within 90 days.

The augmented model delivers all three initiatives for roughly half of what the in-house team costs in a single year, gets the first system into production months earlier (within 90 days, rather than after a five-to-eight-month hiring cycle), and doesn’t saddle you with roughly $1M in permanent payroll for roles that won’t be fully utilized year-round.
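The scenario's numbers can be checked with a few lines of arithmetic. This is a rough sketch using only the figures quoted above. It compares the total augmentation cost against one year of fully-loaded in-house compensation, and it counts engagement length in calendar days as stated. The function names are illustrative.

```python
# Figures quoted in the scenario above (USD).
IN_HOUSE_ANNUAL = (800_000, 1_200_000)  # fully-loaded, four new hires, per year
AUGMENTED_TOTAL = (350_000, 500_000)    # all three engagements combined

# (initiative, number of specialists, engagement length in days)
ENGAGEMENTS = [
    ("demand forecasting", 2, 90),
    ("knowledge assistant", 1, 60),
    ("regulatory compliance", 1, 45),
]


def cost_ratio_bounds(augmented, in_house):
    """Return the smallest and largest possible augmented / in-house cost ratio."""
    return augmented[0] / in_house[1], augmented[1] / in_house[0]


def specialist_days(engagements):
    """Total specialist-days: each specialist counted for each engagement day."""
    return sum(count * days for _, count, days in engagements)


best, worst = cost_ratio_bounds(AUGMENTED_TOTAL, IN_HOUSE_ANNUAL)
engagement_days = sum(days for _, _, days in ENGAGEMENTS)

print(f"augmented cost is {best:.1%} to {worst:.1%} of one in-house year")
print(f"{specialist_days(ENGAGEMENTS)} specialist-days over {engagement_days} engagement days")
```

Pairing low with low and high with high gives about 44% and 42%, which is where "roughly half" comes from. The widest bounds are 29.2% to 62.5%. The engagements add up to 195 calendar days if run back to back, so the eight-to-ten-month delivery estimate leaves room for gaps between them.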

After the engagements, if you discover that one of those specializations is genuinely needed full-time — great, hire for it. But now you’re hiring from a position of clarity, not desperation. You know exactly what the role entails, what success looks like, and what skills actually matter. No more guessing.

The Objections (And Why They Don’t Hold Up)

Every engineering leader considering this model has the same three concerns. Let’s address them directly.

“Augmented engineers won’t understand our business.” Correct — on day one. But the best augmented specialists are selected specifically because they’ve worked in your industry before. They’ve seen your type of data, your type of systems, and your type of challenges at other companies. The ramp-up on business context takes one to two weeks, not months. And your core team — the people who live and breathe the business — are there to provide that context continuously.

“We’ll lose IP and institutional knowledge.” Every well-structured augmentation engagement includes knowledge transfer as a formal deliverable. Documentation, runbooks, pair programming, workshops — these aren’t afterthoughts. They’re built into the engagement from day one. When the augmented specialist rolls off, your internal team should be capable of maintaining and extending everything that was built. If they can’t, the engagement has failed. At gNxt Systems, we measure success by whether your team is stronger after we leave, not by whether you need us to stay.

“Staff augmentation is just fancy outsourcing.” It’s fundamentally different. In outsourcing, you hand a project to an external team that manages it independently. In augmentation, external specialists are embedded directly into your team, working under your management, in your codebase, on your tools, following your processes. They join your standups. They submit pull requests through your code review workflow. They’re functionally indistinguishable from internal team members — except they bring skills your internal team doesn’t have.

The Bottom Line

Your AI team isn’t broken because you hired the wrong people. It’s broken because you’re using a workforce model designed for a stable, slow-moving talent market — and applying it to the fastest-moving technology domain in human history.

The fix isn’t to hire more. It isn’t to hire faster. And it isn’t to throw more budget at the problem.

The fix is to rethink what “your team” means.

Own the core. Augment the rest. Ship faster. Spend less. Cover more ground.

That’s not a compromise. That’s a competitive advantage.

About Author

CEO at gNxt Systems

With 25+ years of expertise, Mr. Anoop Jain delivers complex projects, driving innovation through IT strategies and inspiring teams to achieve milestones in a competitive, technology-driven landscape.